As a kid growing up in a sailing-obsessed house, I learned to read radar imagery at a young age. In a rudimentary way, at least. But this didn’t make the tool user-friendly. Mid-1980s radar returns were blob-like affairs displayed on black-and-white screens. Art often needed to supplement science to decipher complex scenes.

I rediscovered this frustration when, decades later, I started covering sonar for Yachting. I remember attending sea trials with sonar experts who could infer massive amounts of information from what—to me—were blob-like affairs, this time on color screens.

Flash forward to the age of AI and increasing third-party integrations, and the days of needing art to supplement science could be numbered.

Marine electronics companies have always sought to make their sensor products effective and user-friendly, but recent years have seen the rise of cross-platform integrations to make this equipment even better and easier to use. These integrations have been unfurling between sensor manufacturers and third-party AI companies, and between companies that build complementary hardware and software. Today, there are many examples of integrations across the marine-electronics space.

While radars and sonars can be tricky to read, they do produce massive amounts of data. That means AI can help reduce the cognitive load for operators by rapidly separating signal from noise.

Tocaro Blue’s Proteus products began turning heads a couple of years ago, providing vessel captains with clear, smart navigation options that reduce the complexity of navigational charts and radar displays.

Tocaro Blue’s Auto-Focus radar function is a great example of an AI product improving third-party sensing instruments. This function uses machine learning to remove irrelevant targets from a display and to classify returns into one of eight categories, which are represented onscreen by different icons such as small, medium or large vessels, or aids to navigation.

This technology works with all major radar manufacturers’ sensors, effectively turning a display full of radar-return blobs into pictures of real objects.

“Our software, in particular, is focused on X-band marine radar,” says Andrew Rains, Tocaro Blue’s senior sales director. “Our system natively integrates chart and Automatic Identification System data, and fuses it with radar so that you don’t get duplicative information between an AIS target and a radar target, or [between] a charted target and a radar target.”

Additionally, Rains says, the system aligns radar targets with charted targets. This, he says, is quite useful if a chart is inaccurate.
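The de-duplication Rains describes can be pictured with a short Python sketch. This is an illustration only, not Tocaro Blue's method: the `Target` type, the `fuse_ais_radar` function, the flat east/north coordinates in meters and the 50-meter matching gate are all assumptions made for the example.

```python
from dataclasses import dataclass
from math import hypot


@dataclass
class Target:
    source: str      # "ais" or "radar" (hypothetical labels)
    x_m: float       # meters east of own vessel
    y_m: float       # meters north of own vessel
    label: str = ""  # e.g. a vessel name reported over AIS


def fuse_ais_radar(ais, radar, gate_m=50.0):
    """Keep every AIS target, plus only the radar returns that no AIS target explains."""
    fused = list(ais)
    for r in radar:
        # A radar return within gate_m of any AIS position is treated as a duplicate.
        is_duplicate = any(
            hypot(r.x_m - a.x_m, r.y_m - a.y_m) <= gate_m for a in ais
        )
        if not is_duplicate:
            fused.append(r)
    return fused
```

Given one AIS-equipped ferry and two radar returns, one of them sitting almost on top of the ferry, the function would return two targets rather than three: the ferry and the unexplained radar return. A real system would also have to account for timing, heading and sensor error, which this sketch ignores.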

In addition to integrating with radar, Tocaro Blue now works with Sea.AI’s optical-based collision-avoidance systems. “We’re providing radar targets, and their cameras are providing bounding boxes using AI of camera detections,” Rains says. “On their end, they’re doing the fusion between radar and camera.”

Another interesting AI and hardware collaboration is Viam’s Marine AI Platform, which has been integrated with Kongsberg’s Simrad SY50 omnidirectional sonar.

Eliot Horowitz, Viam’s founder and an avid sportfisherman who uses an SY50, says that in this particular integration, Viam’s AI tunes the sonar for range and proper angle while sharpening and clarifying sonar imagery. (Viam integrates with other companies in the marine space, including Viking Yachts, where Viam’s AI-powered robots automate Viking’s fiberglass sanding process.)

“All our AI-focused partnerships make it easier to read and understand other instruments in some way,” Horowitz says. With the SY50 integration, he says, Viam’s AI “translates raw sonar data into actionable insights and allows users to view underwater activity in real time to make informed decisions.”

The result, he says, can help anglers save time and fuel costs, while helping them to identify honey holes.

“With Kongsberg’s SY50, we use AI to combine sonar data with historical, spatial and environmental layers so users can forecast fish migration patterns and monitor marine resources,” Horowitz says. He adds that Viam’s development roadmap involves incorporating information including bathymetric maps, digital elevation models, chart data and environmental factors.

While AI can often improve third-party instruments, two pieces of integrated third-party hardware can also improve the boating experience, especially if AI is involved.

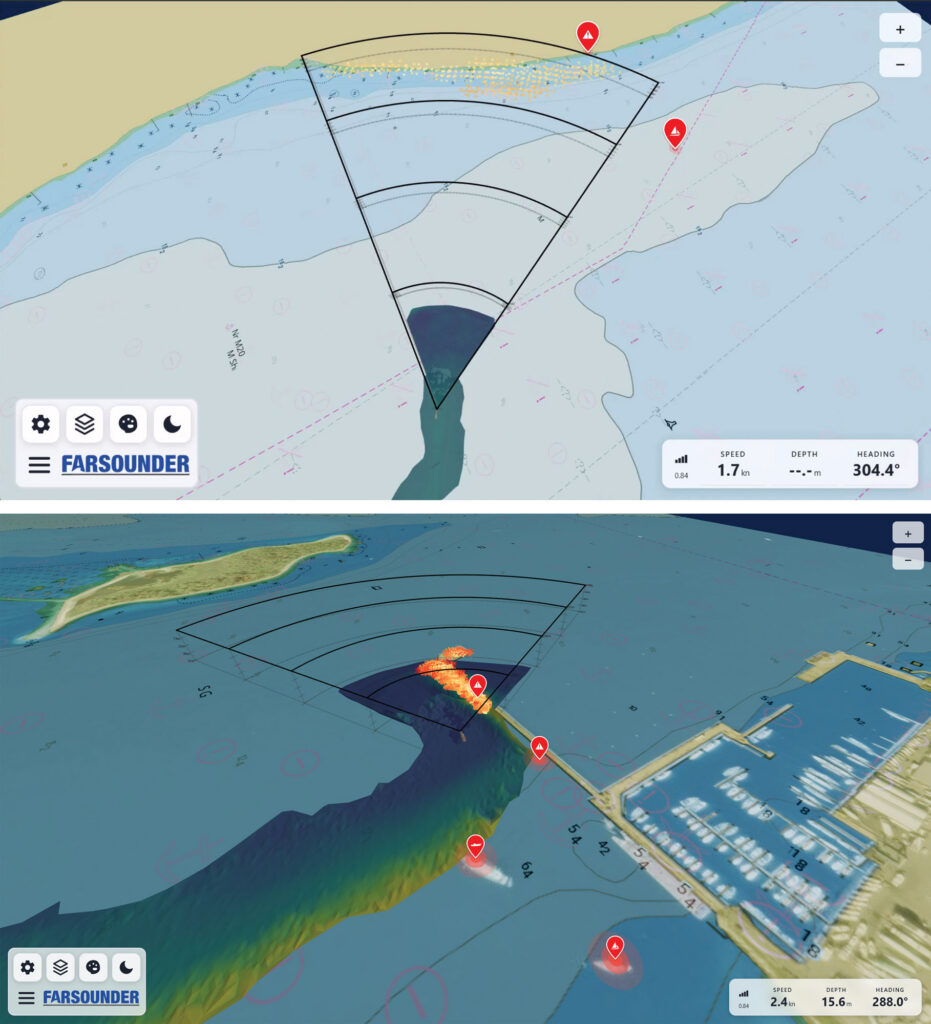

A great example of this involves FarSounder, which makes high-end, forward-looking sonar systems for below-the-waterline obstacle avoidance. The company recently announced its own integration with Sea.AI’s collision-avoidance systems.

“Sea.AI is focused on augmenting the human watchkeeping process above the water, and we see above-water sensors like radars, cameras and so forth as being obvious complements to below-water sensors like ours,” says Matthew Zimmerman, FarSounder’s co-founder and CEO. “Our integration [delivers] the ability to display information from multiple different sensors and data sets together in an easy-to-use, unified interface.”

While FarSounder builds sophisticated hardware, Zimmerman describes the company as “an obstacle-avoidance company that includes a hardware sensor because nobody else makes that type of sensor.”

If this sounds like a company that also spends considerable time and resources developing software and AI, you’re correct. “We’ve really driven our latest innovations on the software side of things,” Zimmerman says.

One example is FarSounder’s convolutional neural network, which Zimmerman describes as a type of machine-learning algorithm. “We took a whole bunch of raw sonar data, so the returns that are coming in to the sensor, and rather than processing through the traditional processing steps, we trained a neural network to say, ‘Here’s raw data, here’s what the output should be for that raw data, learn how to do that, and then take what you’ve learned and apply that to new sets of raw data that you haven’t seen before and process that similarly.’”

While FarSounder’s convolutional neural network currently allows the company’s equipment to produce classified outputs (such as in-water targets or seafloor), in time, Zimmerman says, it will help users to differentiate things such as wake and other noise from actual targets.

These algorithms also help FarSounder integrate, or fuse, its data with information from other companies’ sensors. Data fusion, Zimmerman says, is “where you’re taking either raw data or partially processed data from multiple sources, in this case multiple sensors, and producing an output that’s more valuable than just the sum of the parts.”

An example is an output that can confirm a target’s existence and position using multiple sensors. “There’s value in saying, ‘There’s a radar or Sea.AI target,’” Zimmerman says. “But there’s even more value in saying, ‘Hey, multiple sensors have seen this target, it all correlates together.’”
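The idea of several sensors agreeing on one target can be sketched in a few lines of Python. This is a simplified illustration, not FarSounder's or Sea.AI's algorithm: the `correlate` function, the 30-meter gate and the rule that two distinct sensors make a target "confirmed" are assumptions chosen for the example.

```python
from math import hypot


def correlate(detections, gate_m=30.0, min_sensors=2):
    """Group (sensor, x_m, y_m) detections that fall within gate_m of each other.

    A group counts as confirmed once at least min_sensors distinct sensors
    have contributed to it.
    """
    clusters = []
    for sensor, x, y in detections:
        for c in clusters:
            if hypot(x - c["x"], y - c["y"]) <= gate_m:
                # Fold the detection into the running average position.
                n = c["count"]
                c["x"] = (c["x"] * n + x) / (n + 1)
                c["y"] = (c["y"] * n + y) / (n + 1)
                c["count"] = n + 1
                c["sensors"].add(sensor)
                break
        else:
            # No existing group is close enough, so start a new one.
            clusters.append({"x": x, "y": y, "count": 1, "sensors": {sensor}})
    for c in clusters:
        c["confirmed"] = len(c["sensors"]) >= min_sensors
    return clusters
```

With a radar return and a camera detection 10 meters apart, plus a lone sonar contact far away, the sketch would produce one confirmed group and one unconfirmed group. The unconfirmed one is still reported; it simply carries less weight, which is the distinction Zimmerman draws.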

This, Zimmerman says, translates to fewer false alarms, greater clarity and—if done right—greater simplicity.

Not surprisingly, given how involved FarSounder, Tocaro Blue and Viam are with third-party collaborations and integrations, all three see a bright future for cross-company development. Getting there, however, could take a minute.

“I think we’re going to be playing a very similar game to what’s happening right now a year from now,” Rains says, noting that next-generation sensors and cartography will also play a role in allowing AI integrations to thrive. “In five years, my hope is that everybody can utilize radar data more easily and make it easier to create automated alerts and smart autopilots, where the vessel will not hit something that … the radar detects.”

Horowitz is similarly optimistic at Viam: “Over the next few years, we expect an evolution from displaying raw sensor data to delivering real guidance. AI will become a real co-captain, highlighting risks, accurately fish-finding and learning from patterns over time.”

Zimmerman, however, sees chutes and ladders. “I think it’s going to move to more complex systems, but—if done well—could result in a clearer and easier to understand operational environment,” he says, referring to helm-side operations.

Zimmerman is also clear that there’s value in systems that have their own sea legs.

“We’re advocating that we can have multiple discrete systems presented nicely together,” Zimmerman says. “But if there’s a failure, like something happens to the Ethernet wire connecting our system to their system, you don’t want the whole boat to go down. You just want to lose that one particular increased capability.”
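In software terms, the design Zimmerman describes amounts to treating each sensor feed as optional. A minimal Python sketch follows, assuming a hypothetical `poll_sensors` function and assuming each feed is a simple callable that raises an `OSError` (which includes `ConnectionError` and `TimeoutError`) when its network link is down:

```python
def poll_sensors(sensors):
    """Read every sensor feed, skipping any that fail.

    sensors maps a name to a zero-argument callable that returns a list
    of targets. Returns (targets, unavailable_names).
    """
    targets = []
    unavailable = []
    for name, read in sensors.items():
        try:
            targets.extend(read())
        except OSError:
            # One dead link costs only that sensor's data; the rest carry on.
            unavailable.append(name)
    return targets, unavailable
```

If the forward-looking sonar's cable fails, the helm display in this sketch would still show radar and camera targets and could flag the sonar as unavailable, rather than going dark altogether.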

So, if you’re like me and have struggled to read onscreen imagery, or if supplementing science with art doesn’t come naturally, the odds are excellent that coming integrations of artificial intelligence will help round off boating’s sharp edges.

In-House Integrations

Third-party AI integrations can make marine sensors more user-friendly, but some marine-electronics manufacturers are doing this themselves. Two examples are FLIR’s M460 and M560 thermal-imaging cameras. Each comes with FLIR’s convolutional neural networks that automatically identify and classify camera-detected targets such as buoys and vessels, thus reducing helm-side workloads.